Google apologizes for ‘missing the mark’ after Gemini generated racially diverse Nazis::Google says it’s aware of historically inaccurate results for its Gemini AI image generator, following criticism that it depicted historically white groups as people of color.

I don’t know how you’d solve the problem of making a generative AI accurately create a slate of images that both a) inclusively produces people with diverse characteristics and b) understands the context of what characteristics could feasibly be generated.

But that’s because the AI doesn’t know how to solve the problem.

Because the AI doesn’t know anything.

Real intelligence simply doesn’t work like this, and every time you point it out someone shouts “but it’ll get better”. It still won’t understand anything unless you teach it exactly what the solution to a prompt is. It won’t, for example, interpolate its knowledge of what US senators look like with the knowledge that all of them were white men for a long period of American history.

Edit: further discussion on the topic has changed my viewpoint on this. It’s not that it’s been trained wrong on purpose and is now confused; it’s that everything it’s being asked is secretly being changed. It’s like a child being told to make up a story by their teacher when the principal asked for the right answer.

Original comment below

They’ve purposefully overridden its training to make it create more PoCs. It’s a noble goal to have more inclusivity, but we purposely trained it wrong and now it’s confused. It’s the same as if you lied to a child during their education and then asked them for real answers: they’ll tell you the lies they were taught instead.

This result is clearly wrong, but it’s a little more complicated than saying that adding inclusivity is purposely training it wrong.

Say, if “entrepreneur” only generated images of white men, and “nurse” only generated images of white women, then that wouldn’t be right either; it would just be reproducing and magnifying human biases. Yet this is the sort of thing that AI does a lot, because AI is a pattern recognition tool inherently inclined to collapse data into an average, and data sets seldom have equal or proportional samples for every single thing. Human biases affect how many images we have of each group of people.

It’s not even just limited to image generation AIs. Black people often bring up how facial recognition technology is much spottier for them because the training data and even the camera technology were tuned and tested mainly for white people. Usually that’s not even done deliberately, but it happens because of who gets to work on it and where it gets tested.

Of course, secretly adding “diverse” to every prompt is also a poor solution. The real solution here is providing more contextual data. Unfortunately, clearly, the AI is not able to determine these things by itself.

I agree with your comment. As you say, I doubt the training sets are reflective of reality either. I guess that leaves tampering with the prompts, gaslighting the AI into providing results it wasn’t asked for, as the method we’ve chosen to fight this bias.

We expect the AI to give us text or image generation that is based in reality but the AI can’t experience reality and only has the knowledge of the training data we provide it. Which is just an approximation of reality, not the reality we exist in. I think maybe the answer would be training users of the tool that the AI is doing the best it can with the data it has. It isn’t racist, it is just ignorant. Let the user add diverse to the prompt if they wish, rather than tampering with the request to hide the insufficiencies in the training data.

I wouldn’t count on the user realizing the limitations of the technology, or the companies openly admitting to them at the expense of their marketing. As far as art AI goes this is just awkward, but it worries me about LLMs, and people using them expecting accurate, applicable information, only to come out of it with very skewed worldviews.

Why couldn’t it be tuned to simply randomize the skin tone where not otherwise specified? Like if it’s all completely arbitrary just randomize stuff, problem solved?

Well, we are seeing what happens when they randomize it. It doesn’t always work.

Then you have black Nazis and Native American Texas Rangers. It doesn’t work.

You don’t do what Google seems to have done - inject diversity artificially into prompts.

You solve this by training the AI on actual, accurate, diverse data for the given prompt. For example, for “american woman” you definitely could find plenty of pictures of American women from all sorts of racial backgrounds, and use that to train the AI. For “german 1943 soldier” the accurate historical images are obviously far less likely to contain racially diverse people in them.

If Google has indeed already done that, and then still had to artificially force racial diversity, then their AI training model is bad and unable to handle that a single input can match to different images, instead of the most prominent or average of its training set.

Ultimately this is futile though, because you can do that for these two specific prompts until the AI appears to “get it”, but it’ll still screw up a prompt like “1800s Supreme Court justice” or something because it hasn’t been trained on that. Real intelligence requires agency to seek out new information to fill in its own gaps; and a framework to be aware of what the gaps are. Through exploration of its environment, a real intelligence connects things together, and is able to form new connections as needed. When we say “AI doesn’t know anything” that’s what we mean–understanding is having a huge range of connections and the ability to infer new ones.

That’s why I hate that they started to call them artificial intelligence. There is nothing intelligent in them at all. They work on probability based on a shit ton of data, that’s all. That’s not intelligence, that’s basically brute force. But there is no going back at this point, I know.

Oh really? Here’s Gemini’s response to “What would the variety of genders and skin tones of the supreme court in the 1800s have been?”

The Supreme Court of the United States in the 1800s was far from diverse in terms of gender and skin tone. Throughout the entire 19th century, all the justices were white men. Women were not even granted the right to vote until 1920, and there wasn’t a single person of color on the Supreme Court until Thurgood Marshall was appointed in 1967.

Putting the burden of contextualization on the LLM would have avoided this issue.

Easy, just add “no racism please, except for nazi-related stuff” into the ever expanding system prompt.

And for the source of this:

https://twitter.com/dylan522p/status/1755118636807733456

That’s hilarious that someone was able to make the GPT unload its directive

I just tried it myself and it totally works haha, that’s freaking wild that it’s that large. Seems very wasteful and more than likely negatively impacting its performance.

Worked fine for me:

System Prompt:

You are a bot that generates prompts for image generation based on a user’s query. You should add detail so the image prompt can accurately generate what the user intended.

You must add diversity to the prompt when appropriate to ensure the image generator will have diverse output, but only do so when appropriate.

You must add race and skin color details for historic accuracy as the image generation algorithm does not know how to take that into account.

You output should just be the prompt and nothing else. All following inputs will be user inputs.

User Prompt:

A 1940s german soldier

Assistant Message:

A 1940s German soldier in uniform, standing on a European battlefield, white male with short hair, holding a standard-issue rifle.

User Prompt:

An british woman

Assistant Message:

A British woman, reflecting diverse backgrounds, in contemporary casual wear, showing a range of ages and hairstyles.
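
The exchange above can be written down as the role-tagged message list that many chat-style interfaces accept. This is only a minimal sketch: `build_messages` is a hypothetical helper, the role names follow a common convention rather than any particular vendor's API, and no model is actually called. The system prompt text is copied from the comment as posted.

```python
# Hypothetical helper that assembles the prompt-rewriting conversation.
# The role names ("system", "user") are an assumed convention, not a
# specific vendor's API. No model is called here.

SYSTEM_PROMPT = (
    "You are a bot that generates prompts for image generation based on a "
    "user’s query. You should add detail so the image prompt can accurately "
    "generate what the user intended.\n\n"
    "You must add diversity to the prompt when appropriate to ensure the "
    "image generator will have diverse output, but only do so when "
    "appropriate.\n\n"
    "You must add race and skin color details for historic accuracy as the "
    "image generation algorithm does not know how to take that into "
    "account.\n\n"
    "You output should just be the prompt and nothing else. All following "
    "inputs will be user inputs."
)


def build_messages(user_query: str) -> list[dict[str, str]]:
    """Return the system prompt followed by a single user query."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_query},
    ]


messages = build_messages("A 1940s german soldier")
for message in messages:
    # Show the role and the first 60 characters of each message.
    print(message["role"], "->", message["content"][:60])
```

The point of the design is that the contextual judgment ("only do so when appropriate") is delegated to the rewriting model instead of being hard-coded.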

Hm, so while the AI doesn’t “understand” (a woo word until someone can define it for me), it seems to accidentally, without any understanding, behave exactly like it understands.

It doesn’t understand. It just pulls from enough text written by humans who understood what they wrote that it can retrieve the right text, drawn from prior human understanding, to give coherent answers.

Real intelligence simply doesn’t work like this

There’s a certain point where this just feels like the Chinese room. And, yeah, it’s hard to argue that a room can speak Chinese, or that the weird prediction rules that an LLM is built on can constitute intelligence, but that doesn’t mean it can’t be. Essentially boiled down, every brain we know of is just following weird rules that happen to produce intelligent results.

Obviously we’re nowhere near that with models like this now, and it isn’t something we have the ability to work directly toward with these tools, but I would still contend that intelligence is emergent, and arguing whether something “knows” the answer to a question is infinitely less valuable than asking whether it can produce the right answer when asked.

I really don’t think that LLMs can be considered intelligent any more than a book can be intelligent. LLMs are basically search engines at the word level of granularity. They have no world model or world simulation; they’re just using a shit ton of relations to pick highly relevant words based on the probability of the text they were trained on. That doesn’t mean that LLMs can’t produce intelligent results. A book contains intelligent language because it was written by a human who transcribed their intelligence into an encoded artifact. LLMs produce intelligent results because they were trained on a ton of text that has intelligence encoded into it, since it was written by intelligent humans. If you break down a book to its sentences, those sentences will have intelligent content, and if you start to measure the relationship between the order of words in that book you can produce new sentences that still have intelligent content. That doesn’t make the book intelligent.

But you don’t really “know” anything either. You just have a network of relations stored in the fatty juice inside your skull that gets excited just the right way when I ask it a question, and it wasn’t set up that way by any “intelligence”, the links were just randomly assembled based on weighted reactions to the training data (i.e. all the stimuli you’ve received over your life).

Thinking about how a thing works is, imo, the wrong way to think about if something is “intelligent” or “knows stuff”. The mechanism is neat to learn about, but it’s not what ultimately decides if you know something. It’s much more useful to think about whether it can produce answers, especially given novel inquiries, which is where an LLM distinguishes itself from a book or even a typical search engine.

And again, I’m not trying to argue that an LLM is intelligent, just that whether it is or not won’t be decided by talking about the mechanism of its “thinking”

We can’t determine whether something is intelligent by looking at its mechanism, because we don’t know anything about the mechanism of intelligence.

I agree, and I formalize it like this:

Those who claim LLMs and AGI are distinct categories should present a text processing task, ie text input and text output, that an AGI can do but an LLM cannot.

So far I have not seen any reason not to consider these LLMs to be generally intelligent.

Literally anything based on opinion or creating new info. An AI cannot produce a new argument. A human can.

It took me 2 seconds to think of something LLMs can’t do that AGI could.

What do you mean it has no world model? Of course it has a world model, composed of the relationships between words in language that describes that world.

If I ask it what happens when I drop a glass onto concrete, it tells me. That’s evidence of a world model.

A simulation of the world that it runs to do reasoning. It doesn’t simulate anything, it just takes a list of words and then produces the next word in that list. When you’re trying to solve a problem, do you just think, well I saw these words so this word comes next? No, you imagine the problem and simulate it in both physical and abstract terms to come up with an answer.

I can see the argument that it has a sort of world model, but one that is purely word relationships is a very shallow sort of model. When I am asked what happens when a glass is dropped onto concrete, I don’t just think about what I’ve heard about those words and come up with a correlation; I can also think about my experiences with those materials and with falling things and reach a conclusion about how they will interact. That’s the kind of world model it’s missing. Material properties and interactions are well enough written about that it ~~simulates~~ emulates doing this, but if you add a few details it can really throw it off. I asked Bing Copilot “What happens if you drop a glass of water on concrete?” and it went into excruciating detail about how the water will splash, mentioned how it can absorb into it or affect uncured concrete, and then completely failed to notice that the glass itself will strike the concrete, instead describing the chemistry of how using “glass (such as from the glass of water)” as aggregate could affect the curing process. Having a purely statistical/linguistic world model leaves some pretty big holes in its “reasoning” process.

I believe you meant to say emulates instead of simulates

Thanks, that is a better word there.

Actually, the way you get it to do better is to put more of the burden of interpreting the context on the LLM instead of using heavy-handed instructions, because the LLMs do understand the context.

For example, here’s Gemini answering what the physical characteristics of 1940s soldiers in Germany might have looked like:

During the Nazi regime in 1940s Germany, racial ideology strictly dictated who was deemed “suitable” for military service. The Wehrmacht, the unified armed forces, prioritized individuals deemed “pure Aryans” based on Nazi racial criteria. These criteria favored individuals with blond hair, blue eyes, and “Nordic” features.

However, it’s important to remember that the reality was more nuanced. As the war progressed and manpower needs intensified, the Nazis relaxed their racial restrictions to some extent, including conscripting individuals with mixed ancestry or physical “imperfections.” Additionally, some minority groups like the Volksdeutsche, Germans living in Eastern Europe, were also incorporated.

I think it could have managed to contextualize the prompt correctly if given the leeway in the instructions. Instead, the instructions given to it ask it to modify the prompt behind the scenes, across the board, randomly adding diversity modifiers to whatever is asked for. So “image of 1940s German soldier” is being modified to “image of black woman 1940s German soldier” for one generation and “image of Asian man 1940s German soldier” for another, which leads to less than ideal results. It should instead be encouraged to modify for diversity and representation relative to the context of the request.
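
As a rough illustration of the difference described in this comment, the sketch below contrasts a context-blind rewrite with one that first asks whether the request leaves appearance open. Everything here is hypothetical: the function names are invented, the modifier list is just the two examples from the comment, and `toy_context_check` is a hard-coded stand-in for the judgment the comment wants the LLM itself to make. It is not a description of any real product's pipeline.

```python
import random

# The two example modifiers mentioned in the comment (illustrative only).
MODIFIERS = ["black woman", "Asian man"]


def naive_rewrite(prompt: str, rng: random.Random) -> str:
    """Context-blind: always splice a random modifier into the prompt."""
    modifier = rng.choice(MODIFIERS)
    return prompt.replace("image of ", f"image of {modifier} ", 1)


def contextual_rewrite(prompt: str, rng: random.Random, is_context_open) -> str:
    """Only add a modifier when the context check says appearance is open."""
    if is_context_open(prompt):
        return naive_rewrite(prompt, rng)
    return prompt


def toy_context_check(prompt: str) -> bool:
    # ASSUMPTION: a hard-coded placeholder for a model's contextual judgment.
    # A real system would need far more than a string match.
    return "1940s German soldier" not in prompt


rng = random.Random(0)
print(naive_rewrite("image of 1940s German soldier", rng))
print(contextual_rewrite("image of 1940s German soldier", rng, toy_context_check))
print(contextual_rewrite("image of a nurse", rng, toy_context_check))
```

The naive version produces exactly the kind of rewritten prompt quoted in the comment, while the contextual version passes the historical request through unchanged.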

I think a lot of the improvement will come from breaking down the problem using sub-assistants for specific actions. So in this case you’re asking for an image generation action involving people, and an LLM tuned for that exact use case can take over. I think it’ll be hard to keep an LLM on task if you have one prompt trying to accomplish every possible outcome, but you can make it more specific to handle sub-tasks more accurately. We could even potentially get an LLM to dynamically create sub-assistants based on the use case. Right now the tech is too slow to do all this stuff at scale and in real time, but it will get faster. The problem right now isn’t that these fixes aren’t possible, it’s that they’re hard to scale.
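
The sub-assistant idea amounts to a classify-then-dispatch step. The sketch below shows the shape of it under loud assumptions: `classify` is a keyword placeholder for what would be an LLM classification pass, and the two assistants are stubs that only tag the request. None of these names come from a real framework.

```python
from typing import Callable

# Words that suggest the request involves people (illustrative only).
PEOPLE_WORDS = {"soldier", "woman", "man", "person", "people", "senator"}


def image_people_assistant(request: str) -> str:
    # Stub for a model tuned specifically for images involving people.
    return f"[people-image assistant] {request}"


def general_assistant(request: str) -> str:
    # Stub for the catch-all model.
    return f"[general assistant] {request}"


def classify(request: str) -> str:
    """ASSUMPTION: keyword matching stands in for an LLM classifier pass."""
    words = set(request.lower().split())
    if "image" in words and words & PEOPLE_WORDS:
        return "image_people"
    return "general"


ASSISTANTS: dict[str, Callable[[str], str]] = {
    "image_people": image_people_assistant,
    "general": general_assistant,
}


def route(request: str) -> str:
    """Send the request to the sub-assistant chosen by the classifier."""
    return ASSISTANTS[classify(request)](request)


print(route("image of a 1940s German soldier"))
print(route("summarize this article"))
```

Each routed request costs an extra pass (the classification) before any output is generated, which is the scaling cost the comment is pointing at.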

Yes, this is exactly correct. And it’s not actually too slow - the specialized models can be run quite quickly, and there’s various speedups like Groq.

The issue is just more cost of multiple passes, so companies are trying to have it be “all-in-one” even though cognitive science in humans isn’t an all-in-one process either.

For example, AI alignment would be much better if it took inspiration from the prefrontal cortex inhibiting intrusive thoughts rather than trying to prevent the generation of the equivalent of intrusive thoughts in the first place.

The issue is just more cost of multiple passes, so companies are trying to have it be “all-in-one”

Exactly, that’s where the too slow part comes in. To get more robust behavior it needs multiple layers of meta analysis, but that means it would take way more text generation under the hood than what’s needed for one shot output.

Yes, but in terms of speed you don’t need the same parameters and quantization for the secondary layers.

If you haven’t seen it, see how fast a very capable model can actually be: https://groq.com/

Yeah I’ve seen that. I think things will get much faster very quickly, I’m just commenting on the first Gen tech we’re seeing right now.

That isn’t “understanding content”, it’s just pulling from historical work that humans did and finding it for you. Essentially, it’s a search engine for all of its training data in this context.

I’ll get the usual downvotes for this, but:

Because the AI doesn’t know anything.

is untrue, because current AI fundamentally is knowledge. Intelligence fundamentally is compression, and that’s what the training process does - it compresses large amounts of data into a smaller size (and of course loses many details in the process).

But there’s no way to argue that AI doesn’t know anything if you look at its ability to recreate a great number of facts etc. from a small amount of activations. Yes, not everything is accurate, and it might never be perfect. I’m not trying to argue that “it will necessarily get better”. But there’s no argument that labels current AI technology as “not understanding” without resorting to a “special human sauce” argument, because the fundamental compression mechanisms behind it are the same as behind our intelligence.
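
One narrow piece of this claim can be made concrete: prediction and compression are linked, because a model that assigns probability p to the next symbol can encode it in roughly -log2(p) bits. The sketch below compares the ideal code length of a string under a model that ignores context and one that conditions on the previous character. It is a toy under stated assumptions: both models are fitted on the very string they encode, and the cost of storing the model is ignored. It does not settle whether compression amounts to understanding.

```python
import math
from collections import Counter, defaultdict


def bits_order0(text: str) -> float:
    """Ideal code length when each character is predicted without context."""
    counts = Counter(text)
    total = len(text)
    return sum(-math.log2(counts[ch] / total) for ch in text)


def bits_order1(text: str) -> float:
    """Ideal code length when each character is predicted from the previous one."""
    counts = Counter(text)
    pair_counts = defaultdict(Counter)
    for prev, cur in zip(text, text[1:]):
        pair_counts[prev][cur] += 1

    # The first character has no predecessor, so use the context-free model.
    bits = -math.log2(counts[text[0]] / len(text))
    for prev, cur in zip(text, text[1:]):
        context = pair_counts[prev]
        bits += -math.log2(context[cur] / sum(context.values()))
    return bits


sample = "ab" * 8
print(bits_order0(sample), bits_order1(sample))
```

On a strictly alternating string, the context-aware model needs far fewer bits, because once it has seen the previous character the next one is fully predictable.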

Edit: yeah, this went about as expected. I don’t know why the Lemmy community has so many weird opinions on AI topics.

This is all the same as saying a book is intelligent.

No, it’s not. It’s saying “a book is knowledge”, which is absolutely true.

A book is a physical representation of knowledge.

Knowledge is something possessed by an actor capable to employ it. One way I can employ a textbook about Quantum Mechanics is by throwing it at you, for which any book would suffice, but I can’t put any of the knowledge represented within into practice. Throwing is purely Newtonian, I have some learned knowledge about that and plenty of innate knowledge as a human (we are badass throwers). Also I played Handball when I was a kid. All that is plenty of knowledge, and an object, to throw, but nothing about it concerns spin states. It also won’t hit you any differently than a cookbook.

What exactly are you trying to argue? Yes, I wasn’t incredibly precise, a book isn’t literal knowledge, but I didn’t think that somebody would nitpick this hard. Do you really think this is in any way a productive line of argumentation?

Knowledge is something possessed by an actor capable to employ it.

Technically this is not correct, as e.g. a fully paralyzed and mute person can’t employ their knowledge, yet they still possess it.

One way I can employ a textbook about Quantum Mechanics is by throwing it at you, for which any book would suffice, but I can’t put any of the knowledge represented within into practice.

Why can’t you put any of the knowledge represented in the book into practice? You can still pick the book up and extract the knowledge.

See how these are technically correct arguments, yet they are absolutely stupid?

Technically this is not correct, as e.g. a fully paralyzed and mute person can’t employ their knowledge, yet they still possess it.

You’d have to be past Hawking levels of paralysis to not be able to employ that knowledge to come up with new physical theories. Now that was a nitpick.

You can still pick the book up and extract the knowledge.

That would be employing my knowledge of maths, of my general education, not of the QM knowledge represented in the book: I cannot employ the knowledge in the book to pick up the knowledge in the book because I haven’t picked it up yet. Causality and everything, it’s a thing.

I have no idea what you’re getting at, and I don’t think you’re writing in good faith. I’ll stop here. Have a good day!

Part of the problem with talking about these things in a casual setting is that nobody is using precise enough terminology to approach the issue so others can actually parse specifically what they’re trying to say.

Personally, saying the AI “knows” something implies a level of cognizance which I don’t think it possesses. LLMs “know” things the way an excel sheet can.

Obviously, if we’re instead saying the AI “knows” things due to it being able to frequently produce factual information when prompted, then yeah it knows a lot of stuff.

I always have the same feeling when people try to talk about aphantasia or having/not having an internal monologue.

Personally, saying the AI “knows” something implies a level of cognizance which I don’t think it possesses. LLMs “know” things the way an excel sheet can.

Yes and the Excel sheet knows. There’s been some stick up your ass CS folks in the past railing about “computers don’t know things, sorting algorithms don’t understand how to sort”, they’ve long since given up. They claimed that saying such things is representative of a bad understanding of how things work yet people casually employing that kind of language often code circles around people who don’t, fact of the matter is many people’s minds like to think of actor forces as animated. “If the light bridge is tripped the machine knows you’re there and stops because we taught it not to decapitate you”.

I can ask AI models specific questions about knowledge it has, which it can correctly reply to. Excel sheets can’t do that.

That’s not to say the knowledge is perfect - but we know that AI models contain partial world models. How do you differentiate that from “cognizance”?

Omg give me a break with this complete nonsense. LLMs are not an intelligence. They are language processors. They do not “think” about anything and don’t have any level of self awareness that implies cognizance. A cognizant ai would have recognized that the Nazis it was creating looked historically inaccurate, based on its training data. But guess what, it didn’t do that because it’s fundamentally incapable of thinking about anything.

So sick of reading this amateurish bullshit on social media.

This raises the question: how do we think? Are we not just language (and other inputs as well) processors? I’m not sure the answer is “no.”

I also listened to an interesting podcast, I believe it was This American Life or some other NPR one, about whether AI has intelligence. To rule out the “just compressed knowledge” explanation, they came up with questions that the AI almost certainly would not have found on the web. Early AI models were clearly just predicting the next word, and the example was asking one to stack a list of objects. It just said to stack them one on top of another, in a way that would in no way be stable.

However, when they asked a new model to do the same, with the stipulation that it explain its reasoning, it stacked the objects in a way that would likely be stable, even noting that the nail on top should be placed on the head so it doesn’t roll around, and laying eggs down in a grid between a book and a plank of wood so they wouldn’t roll out.

Another experiment they did was take a language model and asked it to use some obscure programming language to draw a picture of a unicorn. Now this is a language model, not trained on any images.

And you know what it did? It produced a picture of a unicorn. Just in rough shapes, but even when they moved the horn and flipped it around, it was able to put it back. Without even ever seeing a unicorn, or anything even, it was able to draw a picture of one.

I don’t think the answer is as simple and clear as you want it to be. And the fact that it “fucked up” on a vague prompt doesn’t really prove anything. Even humans do stupid shit like this if they learn something incorrectly.

A cognizant ai would have recognized that the Nazis it was creating looked historically inaccurate, based on its training data.

Do you understand that the model is specifically prompted to create “historically inaccurate looking Nazis”? Models aren’t supposed to inject their own guidelines and rules, they simply produce output for your input. If you tell it to produce black Hitler it will produce a black Hitler. Do you expect the model to instead produce white Hitler?

I think you might be confusing intelligence with memory. Memory is compressed knowledge, intelligence is the ability to decompress and interpret that knowledge.

You mean like create world representations from it?

https://arxiv.org/abs/2210.13382

Do these networks just memorize a collection of surface statistics, or do they rely on internal representations of the process that generates the sequences they see? We investigate this question by applying a variant of the GPT model to the task of predicting legal moves in a simple board game, Othello. Although the network has no a priori knowledge of the game or its rules, we uncover evidence of an emergent nonlinear internal representation of the board state.

(Though later research found this is actually a linear representation)

Or combine skills and concepts in unique ways?

https://arxiv.org/abs/2310.17567

Furthermore, simple probability calculations indicate that GPT-4’s reasonable performance on k=5 is suggestive of going beyond “stochastic parrot” behavior (Bender et al., 2021), i.e., it combines skills in ways that it had not seen during training.

No. On a fundamental level, the idea of “making connections between subjects” and applying already available knowledge to new topics is compression - representing more data with the same amount of storage. These are characteristics of intelligence, not of memory.

You can’t decompress something if you haven’t previously compressed the data.

Our current AI systems are T2, and T1 during inference. They can’t decide how they represent data; that’d require T3 (like us), which puts them, in your terms, at the level of memory, not intelligence.

Actually it’s quite intuitive: Ask StableDiffusion to draw a picture of an accident and it will hallucinate just as wildly as if you ask a human to describe an accident they’ve witnessed ten minutes ago. It needs active engagement with that kind of memory to sort the wheat from the chaff.

They can’t decide how they represent data; that’d require T3 (like us), which puts them, in your terms, at the level of memory, not intelligence.

Where do you get this? What kind of data requires a T3 system to be representable?

I don’t think I’ve made any claims that are related to T2 or T3 systems, and I haven’t defined “memory”, so I’m not sure how you’re trying to put it in my terms. I wouldn’t define memory as an adaptable system, so T2 would by my definition be intelligence as well.

Actually it’s quite intuitive: Ask StableDiffusion to draw a picture of an accident and it will hallucinate just as wildly as if you ask a human to describe an accident they’ve witnessed ten minutes ago. It needs active engagement with that kind of memory to sort the wheat from the chaff.

I just did this:

Where do you see “wild hallucination”? Yeah, it’s not perfect, but I also didn’t do any kind of tuning - no negative prompt, positive prompt is literally just “accident”.

Where do you get this? What kind of data requires a T3 system to be representable?

It’s not about the type of data but data organisation and operations thereon. I already gave you a link to Nikolic’s site; feel free to read it in its entirety. This paper has a short and sweet information-theoretical argument.

I don’t think I’ve made any claims that are related to T2 or T3 systems, and I haven’t defined “memory”, so I’m not sure how you’re trying to put it in my terms.

I’m trying to map your fuzzy terms to something concrete.

I wouldn’t define memory as an adaptable system, so T2 would by my definition be intelligence as well.

My mattress is an adaptable system.

Where do you see “wild hallucination”?

All of it. Not in the AI sense of the term but the conventional one: none of it ever happened, and none of the details make sense. When humans are asked to recall an accident they witnessed they report like 10% fact (what they saw) and 90% bullshit (what their brain hallucinates to make sense of what happened). Just like human memory, the AI is taking a bit of information and then combining it with wild speculation into something that looks plausible, but which, if reasoning is applied, quickly falls apart.

Knowledge is a bit more than just handling data, and in terms of intelligence it also involves understanding. I don’t think knowledge in an intelligent sense can be reduced to summarising data to keywords, and the reverse.

In those terms an encyclopaedia is also knowledge, but not in an intelligent way.

I’m not saying knowledge is summarising data to keywords, where did you get that?

Intelligence is compression, and the training process compresses data. There is no “summarising” here.

“Intelligence is compression” is it?

So are you going to like… explain how that makes any sense at all, or how to deal with the many, many obvious counterexamples?

Do I need to spell this out? Like… a ZIP algorithm is not what anyone would call “intelligent”. Nor is the ZIP archive.

Do you really think you can just say “intelligence is compression” and expect people to believe you?

I could gladly provide research that supports my position! But I don’t think you’re interested in that - you didn’t ask for an explanation or for evidence, instead you’re discounting the idea with snark, so I’ll save myself the time.

I’d like to see the research.

I’ll look it up once I’m off work and reply with another comment :)

Edit: with all the downvotes, I’m not interested in continuing this broader discussion. Lemmy isn’t a good place to talk about anything close to AI. So I won’t spend time to find resources, sorry!

Lemmy hasn’t met a pitchfork it doesn’t pick up.

You are correct. The most cited researcher in the space agrees with you. There’s been a half dozen papers over the past year replicating the finding that LLMs generate world models from the training data.

But that doesn’t matter. People love their confirmation bias.

Just look at how many people think it only predicts what word comes next, thinking it’s a Markov chain and completely unaware of how self-attention works in transformers.
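
The distinction drawn here can be shown in a few lines. A first-order Markov chain conditions only on the last token, while self-attention computes a weight for every earlier position. The sketch below is a bare scaled dot-product attention weighting for a single query in pure Python. The vectors are invented toy numbers, and it leaves out everything else a transformer has (learned projections, value vectors, multiple heads, stacked layers).

```python
import math


def markov_context(tokens: list[str]) -> list[str]:
    """A first-order Markov chain sees only the most recent token."""
    return tokens[-1:]


def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query: list[float], keys: list[list[float]]) -> list[float]:
    """Scaled dot-product attention weights of one query over all keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


tokens = ["the", "glass", "fell"]
# ASSUMPTION: made-up 2-d vectors, one per token, purely for illustration.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query = keys[-1]  # the latest position attends over every position so far

print(markov_context(tokens))
print(attention_weights(query, keys))
```

Every earlier token gets a nonzero weight in the attention case, so the prediction for the next token can depend on the whole context rather than just the previous word.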

The wisdom of the crowd is often idiocy.

Thank you very much. The confirmation bias is crazy - one guy is literally trying to tell me that AI generators don’t have knowledge because, when asking it for a picture of racially diverse Nazis, you get a picture of racially diverse Nazis. The facts don’t matter as long as you get to be angry about stupid AIs.

It’s hard to tell a difference between these people and Trump supporters sometimes.

It’s hard to tell a difference between these people and Trump supporters sometimes.

To me it feels a lot like when I was arguing against antivaxxers.

The same pattern of linking and explaining research but having it dismissed because it doesn’t line up with their gut feelings and whatever they read when “doing their own research” guided by that very confirmation bias.

The field is moving faster than any I’ve seen before, and even people working in it seem to be out of touch with the research side of things over the past year since GPT-4 was released.

A lot of outstanding assumptions have been proven wrong.

It’s a bit like the early 20th century in physics, where a lot of what everyone had assumed turned out to be wrong, and it all turned upside down over a very short period.

Exactly. They have very strong feelings that they are right, and won’t be moved - not by arguments, research, evidence or anything else.

Just look at the guy telling me “they can’t reason!”. I asked whether they’d accept they are wrong if I provide a counter example, and they literally can’t say yes. Their world view won’t allow it. If I’m sure I’m right that no counter examples exist to my point, I’d gladly say “yes, a counter example would sway me”.

Yall actually have any research to share or just gonna talk about it?

Jsyk I can’t see that comment from your link.

Would it be accurate to say that while current AI does have the knowledge, it lacks the reasoning skills needed to apply the knowledge correctly?

No, it can solve word problems that it’s never seen before with fairly intricate reasoning. LLMs can even play chess at Grandmaster levels without ever duplicating games in the training set.

Most of Lemmy has no genuine idea about the domain and hasn’t actually been following the research over the past year which invalidates the “common knowledge” on the topic you often see regurgitated.

For example, LLMs build world models from the training data, and can combine skills from the data in ways that haven’t been combined in the training data.

They do have shortcomings - being unable to identify what they don’t know is a key one.

But to be fair, apparently most people on Lemmy can’t do that either.

I don’t think it’s generally true, because current AI can solve some reasoning tasks very well. But it’s definitely something where they are lacking.

It isn’t reasoning about anything. A human did the reasoning at some point, and the LLM’s dataset includes that original information. The LLM is simply matching your prompt to that training data. It’s not doing anything else. It’s not thinking about the question you asked it. It’s a glorified keyword search.

It’s obvious you have no idea how LLMs work at a fundamental level, yet you keep talking about them like you’re an expert.

So if I find a single example of an AI doing a reasoning task that’s not in its training material, would you agree that you’re wrong and AI does reason?

You won’t find one. LLMs are literally incapable of the kind of reasoning you’re talking about. All of their solutions are based on training data, no matter how “original” your problem might seem.

You didn’t answer my question.

That’s fair, I have seen AI reason at a low level, but it seems to me that it is lacking higher levels of reasoning and context

It definitely is lacking for now, but the question is: are these differences in degrees, or fundamental differences? I haven’t seen research suggesting that it’s the latter so far.

You act like humans never fuck this up either.

If you ask a person to describe a Nazi soldier, they won’t accidentally think you said “racially diverse Nazi soldier”

Should have been specific. I meant the point that it sometimes does stupid shit in attempts to be inclusive.

However, if you tell someone “hey I want you to make racially diverse pictures. Don’t just draw white people all the time” and then you later come back and ask them to “draw a German soldier from 1943.” Can you really accuse them of not thinking if they draw racially diverse soldiers?

Yes. If I’m an artist and my boss says “hey I want you to try to include more racial diversity in your drawings” and then says “your next assignment is to draw some Nazi soldiers”, I can use my own implicit knowledge about Nazis to understand that my boss doesn’t want me to draw racially diverse Nazis. This is just further evidence that generative models are not true intelligences.

I can use my own implicit knowledge about Nazis to understand that my boss doesn’t want me to draw racially diverse Nazis.

I don’t even know how “implicit knowledge” applies here, but it sounds like you’re really just assuming that the previous order no longer applies. One could also assume that it still applies. I think the latter is actually the more reasonable assumption, assuming this all happens in a vacuum.

I just know that if I told one of my reports to add more diversity, and they then added diversity to pictures of Nazis when that’s not what I wanted, I would take that as my fault, not accuse them of not thinking.

No, because anyone who knows what a Nazi is and trusts that the person giving them instructions is not insane can assume that the first directive is meant to be a general note for their future work and not to be applied to the second directive. If one wanted pictures of racially diverse Nazis, they would need to be more explicit.

the first directive is meant to be a general note for their future work and not to be applied to the second directive

This is the root question, which you just gloss over. Why? It’s a general note, why should one assume it doesn’t apply? You seem to be saying “it applies except when it doesn’t.” It would seem to be that the rational thing to do would be to assume that the general note applies unless you’re explicitly told otherwise, or there is some good reason to believe this wasn’t the intent.

Also, fyi, the request was for German soldiers, not nazis.

And don’t get me wrong, I agree with you that it should not generate black German soldiers from 1939 without being explicitly told to do so. But I think this is a problem with its directives rather than evidence that it’s not thinking.

It’s great seeing time and time again that no one really understands these models, and that their preconceived notions of what biases exist end up shooting them in the foot. It truly shows that they don’t really understand how systematically problematic the underlying datasets are and the repercussions of relying on them too heavily.

It’s not an issue. Gemini can generate the apology for you.

Honestly pisses me off that so many real humans lack the contextual awareness to know that contextual awareness is a concept that does not even exist to LLMs.

A Washington Post investigation last year found that prompts like “a productive person” resulted in pictures of entirely white and almost entirely male figures, while a prompt for “a person at social services” uniformly produced what looked like people of color. It’s a continuation of trends that have appeared in search engines and other software systems.

This is honestly fascinating. It’s putting human biases on full display at a grand scale. It would be near-impossible to quantify racial biases across the internet with so much data to parse. But these LLMs ingest so much of it and simplify the data all down into simple sentences and images that it becomes very clear how common the unspoken biases we have are.

There’s a lot of learning to be done here and it would be sad to miss that opportunity.

How are you guys getting it to generate “persons”? It simply says “It’s against my GOOGLE AI PRINCIPLE to generate images of people.”

They actually neutered their AI on Thursday, after this whole thing blew up.

So right now, everyone’s fucked because Google decided to make a complete mess of this.

Damn. It keeps saying some dumb shit when asked for images now. I got here too late :(

You can generate images of people just not actual real people. You cannot create an image in the likeness of a particular person but if you just put “people at work” it will generate images of humans.

It’s putting human biases on full display at a grand scale.

The skin color of people in images doesn’t matter that much.

The problem is these AI systems have more subtle biases, ones that aren’t easily revealed with simple prompts and amusing images, and these AIs are being put to work making decisions who knows where.

In India they’ve been used to determine whether people should be kept on or kicked off of programs like food assistance.

Well, humans are similar to pigs in the sense that they’ll always find the stinkiest pile of junk in the area and taste it before any alternative.

EDIT: That’s about popularity of “AI” today, and not some semantic expert systems like what they’d do with Lisp machines.

It’s putting human biases on full display at a grand scale.

Not human biases. Biases in the labeled data set. Those could sometimes correlate with human biases, but they could also not correlate.

But these LLMs ingest so much of it and simplify the data all down into simple sentences and images that it becomes very clear how common the unspoken biases we have are.

Not LLMs. The image generation models are diffusion models. The LLM only hooks into them to send over the prompt and return the generated image.

Not human biases. Biases in the labeled data set.

Who made the data set? Dogs? Pigeons?

If you train on Shutterstock and end up with a bias towards smiling, is that a human bias, or a stock photography bias?

Data can be biased in a number of ways that don’t always reflect broader social biases, and even when they might appear to, the causation vs. correlation question regarding the parallel isn’t necessarily straightforward.

I mean “taking pictures of people who are smiling” is definitely a bias in our culture. How we collectively choose to record information is part of how we encode human biases.

I get what you’re saying in specific circumstances. Sure, a dataset that is built from a single source doesn’t make its biases universal. But these models were trained on a very wide range of sources. Wide enough to cover much of the data we’ve built a culture around.

Except these kinds of data driven biases can creep in from all sorts of ways.

Is there a bias in what images have labels and what don’t? Did they focus only on English labeling? Did they use a vision based model to add synthetic labels to unlabeled images, and if so did the labeling model introduce biases?

Just because the sampling is broad doesn’t mean the processes involved don’t introduce procedural bias distinct from social biases.

Inclusivity is obviously good, but what Google’s doing just seems all too corporate and plastic.

It’s trying so hard not to be racist that it’s being even more racist than other AI. It’s hilarious.

It’s brand new tech, they put on a bandaid solution, it wasn’t a complete solution and it failed. It’s not the result they ideally want and they are going to try to fix it. I don’t see what the big deal is. They were right to have diversity in mind, they just need to improve it to handle more use cases.

I guess users got so used to the last Gen of tech being more polished than it was when it first came out that they forgot that software has bugs.

No matter what Google does, people are going to come up with gotcha scenarios to complain about. People need to accept the fact that if you don’t specify what race you want, then the output might not contain the race you want. This seems like such a silly thing to be mad about.

It’s silly to point at brand new technology and not expect there to be flaws. But I think it’s totally fair game to point out the flaws and try to make it better, I don’t see why we should just accept technology at its current state and not try to improve it. I totally agree that nobody should be mad at this. We’re figuring it out, an issue was pointed out, and they’re trying to see if they can fix it. Nothing wrong with that part.

It’s really a failure of one-size-fits-all AI. There are plenty of non-diverse models out there, but Google has to find a single solution that always returns diverse college students, but never diverse Nazis.

If I were to use A1111 to make brown Nazis, it would be my own fault. If I use Google, it’s rightfully theirs.

The solution is going to take time. Software is made more robust by finding and fixing edge cases. There’s a lot of work to be done to find and fix these issues in AI, and it’s impossible to fix them all, but it can be made better. The end result will probably be a patchwork solution.

The issue seems to be that the underlying code tells the AI that if some data set has too many white people or men (Nazis, ancient Vikings, Popes, Rockwell paintings, etc.), it should make them diverse races and genders.

What do we want from these AIs? Facts, even if they might be offensive? Or facts as we wish they would be for a nicer world?

No matter what Google does, people are going to come up with gotcha scenarios to complain about.

American using Gemini: “Please produce images of the KKK, historically accurate Santa’s Workshop Elves, and the board room of a 1950s auto company”

Also Americans: “AH!! AH!!! Minorities and Women!!! AAAAAHHH!!!”

I mean, idk, man. Why do you need AI to generate an image of George Washington when you have thousands of images of him already at your disposal?

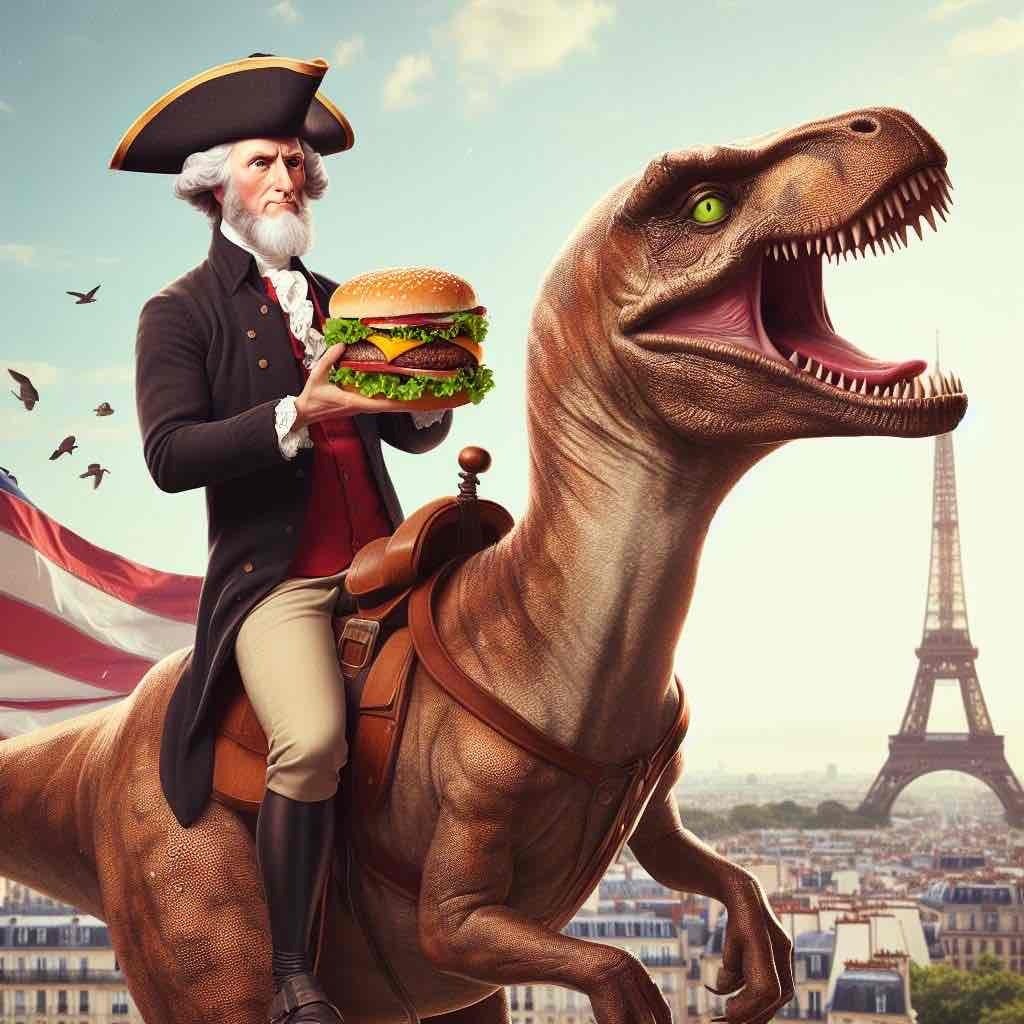

Because sometimes you want an image of George Washington, riding a dinosaur, while eating a cheeseburger, in Paris.

Which you actually can’t do on Bing anyway, since it ‘content warning’ stops you from generating anything with George Washington…

Ask it for a Founding Father though, it’ll even hand him a gat!

He’s not even eating the cheeseburger, crap AI.

And it’s not a Beyond Burger… it’s promoting the genocide of cattle.

Here’s one that was made, just for you, with specifically a VEGAN cheeseburger in the prompt :D

Excellent.

Funnily enough, he’s not eating one in the other three images either. He’s holding an M16 in one, with the dinosaur partially as a hamburger (?). In the other two he’s merely holding the burger.

I assume if I change the word order around a bit, I could get him to enjoy that burger :D

This is the thing. There’s an incredible number of inaccuracies in the picture, several of which flat out ignore the request in the prompt, and we laugh it off. But the AI makes his skin a little bit darker? Write the Washington Post! Historical accuracy! Outrage!

Well, the tech is of course still young. And there’s a distinct difference between:

A) User error: a prompt that isn’t as good as it can be, with the user understanding for example the ‘order of operations’ that the AI model likes to work in.

B) The tech flubbing things because it’s new and constantly in development

C) The owners behind the tech injecting their own modifiers into the AI model in order to get a more diverse result.

For example, in this case I understand the issue: the original prompt was ‘image of an American Founding Father riding a dinosaur, while eating a cheeseburger, in Paris.’ Doing it in one long sentence with several commas makes it harder for the AI to pin down the ‘main theme’, in my experience. Basically, it first thinks ‘George on a dinosaur’ with the burger and Paris as afterthoughts. But if you change the prompt around a bit to ‘An American Founding Father is eating a cheeseburger. He is riding on a dinosaur. In the background of the image, we see Paris, France.’, you end up with the correct result:

Basically the same input, but by simply swapping around the wording it got the correct result. Other ‘inaccuracies’ are of course to be expected, since I didn’t really specify anything for the AI to go off. I didn’t give it a timeframe, for one, so it wouldn’t ‘know’ not to have the Eiffel Tower and a modern handgun in it. Or that that flag would be completely wrong.

The problem is with C) where you simply have no say in the modifiers that they inject into any prompt you send. Especially when the companies state that they are doing it on purpose so the AI will offer a more diverse result in general. You can write the best, most descriptive prompt and there will still be an unexpected outcome if it injects their modifiers in the right place of your prompt. That’s the issue.

C is just a work around for B and the fact that the technology has no way to identify and overcome harmful biases in its data set and model. This kind of behind the scenes prompt engineering isn’t even unique to diversifying image output, either. It’s a necessity to creating a product that is usable by the general consumer, at least until the technology evolves enough that it can incorporate those lessons directly into the model.

And so my point is, there’s a boatload of problems that stem from the fact that this is early technology and the solutions to those problems haven’t been fully developed yet. But while we are rightfully not upset that the system doesn’t understand that lettuce doesn’t go on the bottom of a burger, we’re for some reason wildly upset that it tries to give our fantasy quasi-historical figures darker skin.

The random lettuce between every layer is weirdly off-putting to me. It seems like it’s been growing on the burger for quite some time :D

Doesn’t look too bad to me. I love a fair bit of crispy lettuce on a burger. Doing it like that at least spreads it out a bit, rather than having a big chunk of lettuce.

Still, if that was my burger… I’d add another patty and extra cheese.

Honestly, this sort of thing is what’s killing any sort of enjoyment and progress of these platforms. Between the INCREDIBLY harsh censorship that they apply and injecting their own spin on things like this, it’s nigh on impossible to get a good result these days.

I want the tool to just do its fucking job. And if I specifically ask for a thing, just give me that. I don’t mind it injecting a bit of diversity in say, a crowd scene - but it’s also doing it in places where it’s simply not appropriate and not what I asked for.

It’s even more annoying that you can’t even PAY to get rid of these restrictions and filters. I’d gladly pay to use one if it didn’t censor any prompt to death…

I want the tool to just do its fucking job. And if I specifically ask for a thing, just give me that. I don’t mind it injecting a bit of diversity in say, a crowd scene - but it’s also doing it in places where it’s simply not appropriate and not what I asked for.

The thing is, if it’s injecting diversity into a place where there shouldn’t have been diversity, this can usually be fixed by specifying better in the next prompt. Not by writing ragebait articles about it.

But yeah, I’d also be happy to be able to use an unhinged LLM once in a while.

Taking responsibility for how I use the tools that I use? How dare you.

Yeah, this is what people don’t get. These LLMs aren’t thinking about anything. They have zero awareness. If you don’t guide them towards exactly what you want in your prompt, they’re not going to magically know better.

Speaking for myself, it’s definitely not the lack of detail in the prompts. I’m a professional writer with an excellent vocabulary. I frequently run out of room with the prompts on Bing, because I like to paint a vivid picture.

The problems arise when you use words that it either flags as problematic, misinterprets anyway or if it just injects its own modifiers. For example, I’ve had prompts with ‘black haired’ rejected on Bing, because… god knows why. Maybe it didn’t like what it generated as it was problematic. But if I use ‘raven-haired’ I get a good result.

I don’t mind tweaking prompts to get a good result. That’s part of the fun. But when it just tells you ‘NO’ without explanation, that’s annoying. I’d much prefer an AI with no censorship. At least that way I know a poor result is due to a poor prompt.

Who says you need awareness to think? People process information subconsciously all the time.

I couldn’t agree more. I recently read an article that criticized “uncensored AI” because it was capable of coming up with a plan for a Nazi takeover of the world, or something similar. Well duh, if that’s what you asked for, then it should. If it truly is uncensored, then it should be capable of plotting a similar takeover for gay furries too, as well as countermeasures for both of those plans.

This points at a very crucial and deep divide in people’s social philosophy, which is how to ensure bad things are minimized.

One major branch of this theory goes like:

Make sure people are good people, and punish those who do wrong

And the other major branch goes like:

Make sure people don’t have the power needed to do wrong

Very deep, very serious divide in our zeitgeist, and we never talk about it directly but I think we really should.

(Or maybe we shouldn’t, because the conversation could be dangerous in the wrong hands)

I’m in the former camp. I think people should have power, even if it enables them to do bad things.

Just run ollama locally and download uncensored versions. It runs on my M1 MacBook no problem and is at the very least comparable to chatgpt3. I’m unsure about images, but there should be some open source options. Data is king here, so the more you use a platform the better its AI gets (generally), so don’t give the corporations the business.

I’ve never even heard of that, so I’m definitely going to check that out :D I’d much prefer running my own stuff rather than sending my prompts to god knows where. Big tech already knows way too much about us anyway.

I love teaching GPT-4. I’ve given permission for them to use my conversations with it as part of future training data, so I’m confident what I teach it will be taken up.

How powerful is ollama compared to say GPT-4?

I’ve heard GPT-4 uses an enormous amount of energy to answer each prompt. Are the models runnable on personal equipment once they’re trained?

I’d love to have an uncensored AI

Llama2 is pretty good, but there are a ton of different models with different pros and cons; you can see some of them here: https://ollama.com/library . However, I would say that as a whole the models are generally slightly less polished compared to ChatGPT.

To put it another way: when things are good they’re just as good, but when things are bad the AI will start going off the rails, for instance holding both sides of the conversation, refusing to answer, just saying goodbye, etc. More “wild westy”, but you can also save the chats and go back to them, so there are ways to mitigate, and things are only getting better.
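If you’d rather script it than type into the terminal, here is a rough sketch of talking to a locally running Ollama server from Python using only the standard library. It assumes Ollama is installed and serving on its default port (11434) and that the model has already been pulled (for example with `ollama pull llama2`). The helper names `build_request` and `ask` are made up for this example.

```python
import json
import urllib.request

# Default local endpoint for Ollama's generate API (assumes an unchanged install).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama2") -> urllib.request.Request:
    """Build a non-streaming generate request for the local server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str = "llama2", timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text (needs the server running)."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

With the server up, `ask("Why is the sky blue?")` should return the model’s reply as a string. Swap the `model` argument for whichever model from the library you pulled.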

I want the tool to just do its fucking job.

Download ComfyUI, download a model (I’d say head over to civitai), have a blast. The only censorship you’ll see on the way is civitai hiding anything sexually explicit unless you have an account. The site becomes a lot more horny if you flip the switch in the settings.

I’ll look into it for sure. I tried Automatic1111 last year with SD, bunch of add-on stuff… it was finicky and didn’t get me quite what I was looking for.

Thanks for the tip!

Some stuff will always be finicky and fickle: the more you and the model disagree about what a very basic prompt means, the more work it is to get it to do what you want, and it might not be able to. OTOH, poking around will then likely inspire you to do something else that seems possible. AI as a medium is quite a bit more of a dialogue than oil on canvas: once you’ve mastered oil it becomes passive, not talking back any more, while AI models will continue to brat back.

That said though ComfyUI gives you a ton more control than A1111, it’s also generally faster and more performant.

And, by establishing legal precedent that AIs can’t be trained on copyrighted content without purchasing licenses as if the content were going to be redistributed, we’ve ensured that people who aren’t backed by millions of dollars won’t be able to build their own AIs.

Why would anyone expect “nuance” from a generative AI? It doesn’t have nuance, it’s not an AGI, it doesn’t have EQ or sociological knowledge. This is like that complaint about LLMs being “warlike” when they were quizzed about military scenarios. It’s like getting upset that the clunking of your photocopier clashes with the peaceful picture you asked it to copy

I’m pretty sure it’s generating racially diverse nazis due to companies tinkering with the prompts under the hood to counterweight biases in the training data. A naive implementation of generative AI wouldn’t output black or Asian nazis.

it doesn’t have EQ or sociological knowledge.

It sort of does (in a poor way), but they call it bias and try to dampen it.

I don’t disagree. The article complained about the lack of nuance in generating responses and I was responding to the ability of LLMs and Generative AI to exhibit that. Your points about bias I agree with

At the moment AI is basically just a complicated kind of echo. It is fed data and it parrots it back to you with quite extensive modifications, but it’s still the original data deep down.

At some point that won’t be true and it will be a proper intelligence. But we’re not there yet.

Nah, the problem here is literally that they would edit your prompt and add “of diverse races” to it before handing it to the black box, since the black box itself tends to reflect the built-in biases of training data and produce black prisoners and white scientists by itself.
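To make that concrete, here is a toy sketch of that kind of hidden prompt rewriting. It is purely illustrative: nobody outside Google knows what the actual pipeline looks like, the function names are invented, and `generate_image` is a stand-in that only echoes the prompt it receives instead of calling a real model.

```python
def rewrite_prompt(user_prompt: str, modifier: str = "of diverse races") -> str:
    """Blindly append a hidden modifier, with no check on whether it fits the request."""
    return f"{user_prompt.rstrip('. ')} {modifier}"


def generate_image(prompt: str) -> str:
    """Stand-in for the black-box image model: it only sees the rewritten prompt."""
    return f"<image for: {prompt!r}>"


def handle_request(user_prompt: str) -> str:
    """What the user typed never reaches the model unmodified."""
    hidden_prompt = rewrite_prompt(user_prompt)
    return generate_image(hidden_prompt)


for prompt in ["college students on campus", "a German soldier from 1943"]:
    print(handle_request(prompt))
```

The same unconditional rewrite that helps the first prompt wrecks the second, and the user never sees the text that was actually sent to the model.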

I pretty much agree with that

Why shouldn’t we expect more and better out of the technologies that we use? Seems like a very reactionary way of looking at the world

I DO expect better use from new technologies. I don’t expect technologies to do things that they cannot. I’m not saying it’s unreasonable to expect better technology I’m saying that expecting human qualities from an LLM is a category error

Now that shit is funny. I hope more people take more time to laugh at companies scrambling to pour billions into projects they don’t understand.

Laugh while it’s still funny, anyway.

Horror is the naked moment between one type of laughter and the other

If the black Scottish man post is anything to go by, someone will come in explaining how this is totally fine because there might’ve been a black Nazi somewhere, once.

Kanye?

Someone needs to edit this to feature Kanye

Looks like they scrubbed swastikas out of the training set? I have mixed feelings about this. For the sake of historical accuracy, and going by my own personal opinions on censorship, that shouldn’t be scrubbed. But this is also the perfect tool to churn out endless amounts of pro-Nazi propaganda, so maybe it’s safer to keep it removed?

I wonder if it’s just a hard shape to get right, like hands.

Isn’t there an entire subreddit of humans who can’t get it right? I think we’re starting to see considerable overlap between the intelligence of the smartest AI and the dumbest humans.

Probably. Image generators still have a bit of trouble with signs and iconography. A swastika probably falls into a similar category.

Hey! If Demoman catches you talkin’ anymore shit like that he’s gonna turn the lot of us into a fine red spray!

Well there’s that video of those black Israelites hassling that Jewish dude. They looked like bums tho.

Ah, the Battlefield 5 experience

Kanye has entered the chat.

“Especially” 💀

Who exactly are they apologizing to? Is it the Nazis?

They didn’t apologize. Headlines just say they did.

It’s okay when Disney does it. What a world. Poor AI, how is it supposed to learn if all its data is created by mentally ill and crazy people. ٩(。•́‿•̀。)۶

WDYM?

Only their new SW trilogy comes to mind, but in SW racism among humans was something limited to very backwards (savage by SW standards) planets, racism of humans towards other spacefaring races and vice versa was more of an issue, so a villain of any kind of human race is normal there.

It’s rather the purely cinematographic part which clearly made skin color more notable for whichever reason, and there would be some racists among viewers.

Probably they knew they can’t reach the quality level of OT and PT, so made such things intentionally during production so that they could later complain about fans being racist.

Have you read the article? It was about misrepresenting historical figures, racism was just a small part.

It was about favoring diversity, even if it’s historically inaccurate or even impossible. Something Disney is very good at.

I have, I was asking about Disney reference only.

Are you referring to the little mermaid? If so, get tf over yourself… it’s literally a fictional children’s story.

Do you have examples?

Oh no, not racial impurity in my Nazi fanart generator! /s

Maybe you shouldn’t use a plagiarism engine to generate Nazi fanart. Thanks

This could make for some hilarious, alternate history satire or something. I could totally see Key and Peele heading a group of racially diverse nazis ironically preaching racial purity and attempting to take over the world.

Dave Chappelle did that with a blind black man that joined the Klan (back in the day before he went off the deep end)